Check out Takumi’s NEW English YouTube channel🎵

↓↓↓

https://www.youtube.com/@takuway

A blog I've kept going for so long.

I think it might be time to wrap it up.

I'd gotten so used to this blog format over the years…

Going forward, I'm thinking of writing on note.

I'll practice while running both in parallel.

Even as things take yet another new shape — I hope you'll stick around!

Thank you!

A message from a friend that really hits home.

The bottom line: AI has become the greatest hacker in history.

The problem isn't security itself — it's not about being unbreakable, but about being prepared so that when you are breached, the damage stays minimal.

↓↓↓

Speaking of which — have you heard about Anthropic's recently announced Mitos and how extraordinarily powerful it is in the security space? Or the hacking incident two days ago, where AI was used to breach the internal systems of Vercel, a major server company?

In simple terms: Mitos already surpasses the world's top human security professionals. And the Vercel hack wasn't even done with Mitos — it was carried out using existing AI that performs below Mitos's level.

So what's the point of all this? I think security is something you will absolutely not be able to avoid — whether in the various businesses you develop going forward, or the ones you're already running. And honestly, it applies to anyone doing work on a computer, myself included.

So I asked ChatGPT about the future of security, including the Mitos situation, and got a lot of valuable insight — sharing it here.

↓↓↓

# Key Points to Keep in Mind When Launching New Businesses Going Forward

## The Core Premise

In the era ahead, the important mindset shift is away from "build something that can never be breached" and toward "build something that won't be fatally wounded when it is."

The reason: as AI evolves, the attackers' speed of exploration, degree of automation, and volume of attacks all increase, and the assumption that humans alone can defend against this becomes increasingly untenable.

---

## 1. What to Watch Out for First

1-1. Be clear about what your business is "holding"

The more of the following you hold, the higher the risk:

- Money

- Personal information

- Confidential information

- Authentication credentials

- API keys

- Payment information

- Access to production environments

- Customer behavior logs

- Customer files and data

- Authorization to connect to other services

Why it matters

The heavier what you're holding, the greater the loss from a single incident.

For example:

- Holding money → unauthorized transfers, refunds, liability, reputational damage

- Holding personal information → data leaks, legal response, customer churn

- Holding authentication credentials → cascading breaches across other services

- Holding production access → one breach can take down the entire service

What to keep in mind

In a new venture, start by minimizing "what you hold and how much of it."

---

1-2. Don't expand permissions just for the sake of convenience

Once you bring in AI and automation, it's tempting to design a system that can do everything.

But these are the dangerous ones:

- Auto-send

- Auto-payment

- Auto-delete

- Auto-post

- Auto-update

- Direct deployment to production

- Full access to customer accounts

Why it's dangerous

If anything goes wrong — with the AI, a connected service, or authentication credentials — the damage escalates immediately.

What to keep in mind

Don't make AI an "executor" from the start. Keep it limited to the following at first:

- Investigating

- Analyzing

- Proposing

- Detecting anomalies

- Drafting

- Executing only after human approval

---

1-3. Design with accidents as a given from the start

Going forward, building on the assumption that "accidents won't happen" is itself dangerous.

Things to think through

- If breached, what breaks?

- How far does the damage spread?

- Can it be stopped quickly?

- Can it be restored quickly?

- Can you explain it to customers?

- Can the damage be contained locally?

The ideal state

- One feature failing doesn't stop the whole system

- One customer's incident doesn't spread to all customers

- One leaked API key doesn't bring down every function

- Mistakes can be undone

- Anomalies trigger immediate shutdown
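As a rough illustration of "anomalies trigger immediate shutdown" without stopping the whole system, here is a minimal per-feature breaker sketch in Python. The class name, the feature names, and the threshold of three anomalies are all assumptions made up for this example, not something from the original advice.

```python
class FeatureBreaker:
    """Disables one feature after repeated anomalies, leaving others running."""

    def __init__(self, max_anomalies=3):  # threshold is a hypothetical value
        self.max_anomalies = max_anomalies
        self.anomalies = {}    # feature name -> anomaly count
        self.disabled = set()  # features currently shut down

    def report_anomaly(self, feature):
        self.anomalies[feature] = self.anomalies.get(feature, 0) + 1
        if self.anomalies[feature] >= self.max_anomalies:
            self.disabled.add(feature)

    def is_enabled(self, feature):
        return feature not in self.disabled

    def restore(self, feature):
        # Undo the shutdown once a human has checked what happened.
        self.disabled.discard(feature)
        self.anomalies[feature] = 0


breaker = FeatureBreaker()
for _ in range(3):
    breaker.report_anomaly("auto_post")  # hypothetical feature name

auto_post_running = breaker.is_enabled("auto_post")
reports_running = breaker.is_enabled("reports")
```

Only the feature that misbehaved is stopped, and `restore` keeps the shutdown reversible.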

---

## 2. Design Philosophies to Pay Particular Attention to Going Forward

2-1. Be mindful of "low custody"

Custody, put simply, is "the degree to which you're holding heavy things."

Low custody examples

- Analytics tools

- Report generation

- Visualization

- Decision support

- Knowledge organization

- Information provision

- Education

- Dashboards

- Business improvement support

High custody examples

- Payments

- Money transfers

- Wallets

- Authentication infrastructure

- Medical information management

- Large-scale personal data storage

- Customer file storage

- Automated production environment operations

- Cross-access to critical corporate data

Why it matters

Even at the same revenue level, high custody businesses tend to face losses of an entirely different magnitude when an incident occurs.

Conclusion

If you're starting small with a lean team, begin with "high value-add, low custody."

---

2-2. Don't build from scratch what doesn't need to be built from scratch

The danger is implementing difficult components yourself when they're not core to your business.

Particularly dangerous:

- Building authentication yourself

- Building payments yourself

- Building permission management carelessly

- Deprioritizing log infrastructure

- Building file storage carelessly

- Cutting corners on secrets management

- Skipping permission separation

- Deprioritizing backup and recovery

Why it's dangerous

Accidents happen in the hard parts that aren't your core value. And these kinds of accidents are extremely painful to fix after the fact.

Conclusion

For dangerous territory that isn't central to your value, lean as much as possible on established, proven systems.

---

2-3. Don't over-collect data

The old thinking was "the more data, the stronger you are."

Going forward, "not holding it is itself a strength."

Why it's dangerous

The more data you hold, the greater the damage when it leaks.

Especially dangerous:

- Raw personal information

- Identity verification information

- Payment-related information

- Unlimited storage of raw logs

- Mass storage of audio, images, and documents

- Customers' confidential information

- Access tokens for third-party services

Conclusion

Hold only the absolute minimum.

Keep retention periods short. If you don't need to hold it, don't.
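To make "hold only the minimum, for a short time" a little more concrete, here is a small Python sketch. The field names and the 30-day retention period are hypothetical values chosen for illustration; what you actually need to keep depends on your own business and legal requirements.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical allow-list: anything not named here is never stored.
ALLOWED_FIELDS = {"user_id", "plan", "created_at"}
# Hypothetical retention period.
RETENTION = timedelta(days=30)


def minimize(record):
    """Keep only the fields on the allow-list."""
    return {k: v for k, v in record.items() if k in ALLOWED_FIELDS}


def purge_expired(records, now):
    """Drop records older than the retention period."""
    cutoff = now - RETENTION
    return [r for r in records if r["created_at"] >= cutoff]


now = datetime(2026, 5, 1, tzinfo=timezone.utc)
raw = {
    "user_id": "u1",
    "plan": "basic",
    "created_at": now - timedelta(days=10),
    "card_number": "4111-xxxx",     # never needed, so never stored
    "home_address": "somewhere",    # same
}
stored = [
    minimize(raw),
    {"user_id": "u2", "plan": "basic", "created_at": now - timedelta(days=45)},
]
kept = purge_expired(stored, now)
```

An allow-list is used rather than a block-list, so a new sensitive field is dropped by default instead of being stored by accident.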

---

## 3. What to Watch Out for in Development

3-1. "Add security later" is dangerous

This is becoming even more dangerous going forward.

Dangerous thinking:

- Build first, harden later

- It's an MVP, so minimal security is fine

- We'll revisit it when users increase

- We're not being targeted yet, so we're okay

- It's a small service, so it won't stand out

Why it's dangerous

As AI advances, even small services become subject to automated exploration and attack. The era is shifting from "targeted because you stand out" to "targeted mechanically because you have a vulnerability."

Conclusion

Even for an MVP, the following are non-negotiable from day one:

- Authentication

- Permission separation

- Logging

- Backup

- Secrets management

- Basic input validation

- Dependency library management

- Auditability

---

3-2. Don't give AI too much authority

Dangerous examples:

- AI freely reading and writing customer data

- AI directly updating production environments

- AI executing transfers or payments

- AI auto-sending emails

- AI auto-publishing articles or posts

- AI immediately executing deletions

Why it's dangerous

AI errors, manipulation, misrecognition, and connected-service incidents translate directly into serious accidents.

The safe approach:

- Read-centered

- Write operations require approval

- Dangerous operations require multi-step confirmation

- Publication and payments ultimately require human judgment

- Minimize data passed to AI

- Always maintain audit logs
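The safe approach above can be sketched as a simple approval gate. This is only a minimal Python illustration under my own assumptions: the action names, the `ApprovalGate` class, and the `approved_by` parameter are hypothetical, and a real system would also need to verify who the approver actually is.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical set of actions the AI may perform without approval.
READ_ONLY_ACTIONS = {"investigate", "analyze", "propose", "draft"}


@dataclass
class ProposedAction:
    name: str
    payload: dict


@dataclass
class ApprovalGate:
    audit_log: list = field(default_factory=list)

    def _record(self, action, outcome):
        # Every decision is logged, whether it was allowed or held.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action.name,
            "outcome": outcome,
        })

    def submit(self, action, approved_by=None):
        """Return True if the action may run, False if it is held for review."""
        if action.name in READ_ONLY_ACTIONS:
            self._record(action, "allowed (read-only)")
            return True
        if approved_by:
            self._record(action, f"allowed (approved by {approved_by})")
            return True
        self._record(action, "held for human approval")
        return False


gate = ApprovalGate()
draft_ok = gate.submit(ProposedAction("draft", {"to": "customer"}))
send_held = gate.submit(ProposedAction("send_email", {"to": "customer"}))
send_ok = gate.submit(
    ProposedAction("send_email", {"to": "customer"}), approved_by="reviewer"
)
```

Drafting goes through on its own, while sending is held until a human signs off, and all three decisions end up in the audit log.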

---

3-3. Avoid structures where one mistake collapses everything

Dangerous structures:

- All authority concentrated in a single admin account

- All functions running on a single API key

- All customer data stored together in plaintext in one place

- Production, development, and testing not separated

- Refunds, deletions, sends, and updates executable under the same permissions

- External connection tokens held without limit

Conclusion

From the start:

- Separate

- Restrict

- Localize

- Make it reversible

These are essential.
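One way to picture "separate, restrict, localize" is to give each API key its own narrow scope instead of running everything on a single key. The key names and scope strings below are hypothetical, invented only for this sketch.

```python
# Hypothetical keys, each limited to the operations it actually needs.
KEY_SCOPES = {
    "key-reporting": {"read:reports"},
    "key-support": {"read:tickets", "send:reply"},
    "key-billing": {"issue:refund"},
}
REVOKED = set()


def is_allowed(key_id, operation):
    """A key can only perform operations inside its own scope."""
    if key_id in REVOKED:
        return False
    return operation in KEY_SCOPES.get(key_id, set())


def revoke(key_id):
    """Shut off one leaked key without touching the others."""
    REVOKED.add(key_id)


# Suppose the reporting key leaks.
leaked_can_refund = is_allowed("key-reporting", "issue:refund")
revoke("key-reporting")
reporting_after_revoke = is_allowed("key-reporting", "read:reports")
billing_still_works = is_allowed("key-billing", "issue:refund")
```

The leaked key could never issue refunds in the first place, and revoking it leaves billing untouched, so the damage stays local.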

---

## 4. What to Watch Out for as a Business

4-1. Don't make decisions based on revenue alone

Going forward, making business decisions purely on expected revenue is dangerous.

What to look at:

- Normal-state profit

- Security operation costs

- Incident response costs

- Legal and compliance costs

- Losses when an incident occurs

- Reputational damage costs

- Retention decline

- Customer support workload

- Need for insurance and audits

The essence

Even with large revenue, if one incident can wipe it all out — that's dangerous.
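As a back-of-the-envelope way to compare businesses on more than revenue, you can subtract security costs and the expected incident loss from normal-state profit. The formula is a deliberately simplified sketch, and every number below is hypothetical (in yen), chosen only to show the shape of the comparison.

```python
def risk_adjusted_profit(normal_profit, security_cost,
                         incident_probability, incident_loss):
    """Normal-state profit minus security costs and expected incident loss."""
    expected_loss = incident_probability * incident_loss
    return normal_profit - security_cost - expected_loss


# Hypothetical high custody business: larger profit, much heavier incident.
high_custody = risk_adjusted_profit(
    normal_profit=10_000_000,
    security_cost=1_000_000,
    incident_probability=0.25,
    incident_loss=40_000_000,
)

# Hypothetical low custody business: smaller profit, lighter incident.
low_custody = risk_adjusted_profit(
    normal_profit=6_000_000,
    security_cost=500_000,
    incident_probability=0.25,
    incident_loss=2_000_000,
)
```

With these made-up figures, the business with the larger headline profit comes out behind once the potential incident is priced in.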

---

4-2. Low-price businesses tend to be higher risk

Why:

Security and operations carry fixed costs.

In other words, this combination is dangerous:

- Low unit price

- High customer volume

- Heavy support burden

- Large amounts of data held

- High potential for fraud

Conclusion

Going forward, "high value-add, premium pricing" tends to be safer than "cheap and high volume."

---

4-3. Trust becomes the most important asset

In the AI era, performance differences alone won't sustain a competitive advantage.

What remains is:

- Trust

- Safety

- Reliability

- Transparency

- The ability to respond when problems arise

Why:

The core capabilities of AI will continue to commoditize.

At that point, what differentiates is "this is a company I can trust."

---

## 5. Where Old-School Business Thinking Becomes Dangerous

5-1. "We're too small to be targeted" is dangerous

Automated exploration is advancing — whether you're targeted depends on your vulnerabilities, not your size.

5-2. "We can fix it when problems arise" is dangerous

The impact of a single incident is growing. Trust can be destroyed before you even get to fixing it.

5-3. "A little risk is fine if it's convenient" is dangerous

Giving AI and automation too much authority in the name of convenience means that when something goes wrong, the destructive power is too great.

5-4. "More data means more value" is dangerous

Going forward, simply holding data is itself a risk.

5-5. "Authentication, permissions, and auditing can wait" is dangerous

Fixing them later is extremely difficult — and early design flaws tend to linger for a long time.

5-6. "AI is safer than humans if you leave it to it" is dangerous

AI is powerful, but it is neither omnipotent nor flawless. Especially when given execution authority, the scale of accidents grows.

5-7. "Building everything in-house makes you stronger" is dangerous

A small team holding everything expands the surface area that needs defending. It means taking on dangerous territory that isn't core to your business.

5-8. "We'll get our systems in order once we've grown" is dangerous

Fixing things after growth means larger data volumes, more customers, broader impact — and the costs of both fixes and incidents skyrocket.

---

## 6. Core Principles Suited to the Era Ahead

Principle 1

Choose high value-add, low custody businesses

Principle 2

Use AI first as a proposer and analyst — don't make it too much of an executor

Principle 3

Don't hold dangerous territory you don't need to hold yourself

Principle 4

Minimize data, permissions, and secrets

Principle 5

Design with breaches as a given — plan how to stop, restore, and contain damage

Principle 6

Factor in potential losses from incidents, not just expected revenue

Principle 7

Build security operation costs into your pricing from day one

## 7. In One Line

What's dangerous in the era ahead is: "A business that's convenient, fast, and looks like it will grow — but holds money, secrets, and authority broadly, and can be finished off in a single incident."

What's strong, on the other hand, is: "A high value-add business that doesn't hold too much heavy responsibility, and is designed so that even if breached, it won't be fatal."

## 8. The Most Important Principle

What truly matters in business going forward is not "being unbreakable."

It's "not dying even when you are broken."

_______________________________

It's not only large companies that get targeted. Because AI can automate everything, individuals get hit just as thoroughly.

If there's a hole, it gets broken through.

Security is essential. No — what matters is building a state where being broken through is survivable.

Is the era of "please buy this"

and hard selling finally over?

Getting people to want it

Making them desire it.

But how?!

The common thread among people who don't want something is simply that they don't know it's useful — that it has value.

So then — educate them. Teach them.

What you share isn't the product or the service.

It's: What do they need? What are they looking for?

And so, when they encounter this service, this product — they want it naturally.

Selling without selling. That's education-based sales.

Nurturing

↓↓↓

■ Most commonly used terms

👉Pre-selling

= Shaping the other person's understanding, values, and expectations before you ever start selling in earnest

■ A slightly more essence-driven way to put it

👉Education-based Marketing

= Creating a state where people want it — by teaching them before you sell

■ From a coaching perspective: the Takumi way

👉 Goal-setting (pre-emptive mindset shift)

= Showing people their desired future before anything else

■ Other terms used by sales professionals

- Nurturing (customer cultivation)

- Indoctrination (ideological alignment) ※ a stronger framing

- Warming up (relationship building)

■ The essence in one line

👉"Creating the reason to want it — before you ever sell"

■ As a viral-worthy line

👉Sales aren't decided at the close.

"90% is already decided by the education that comes before it."

We're going to have a Claude seminar〜

"First Time with Claude" — Zoom Seminar Confirmed!

For those who've tried ChatGPT and Gemini but didn't feel anything change at work. The answer to that lingering frustration might just be Claude.

Takumi Yamazaki × Hajime Takanashi (Cognify LLC)

You've tried the AI tools, but…

✔️ You haven't been able to fully apply them to your actual work

✔️ The responses are close, but missing that extra layer of depth

✔️ It stays at the chat level, and you haven't been able to build it into a real system

Tonight, we're sharing the solution.

So what makes Claude different?

Compared to ChatGPT and Gemini, Claude is known for:

💬 Understanding long, complex context with remarkable accuracy

🧠 Exceptional depth of reasoning and strength in text generation

⚙️ A design that lends itself naturally to real-world, practical use

But without knowing how to use it, even the best tool goes to waste.

In this seminar, Hajime Takanashi — who has led AI implementation across a wide range of industries — will walk you through the "Claude methods that actually work," live and in real time.

📋 Seminar Details

🗓 Date: Monday, May 5, 2026 (Public Holiday), 18:00~

🎥 Format: Zoom

🎤 Guest: Hajime Takanashi (Representative, Cognify LLC)

🏅 Host: Takumi Yamazaki

💰 Participation Fee

🎤 Zoom only: ¥2,200

📹 Archive only: ¥5,500

🎤📹 Zoom + Archive: ¥5,500

💡 Zoom + Archive is the same price as Archive alone — great value! Joining live is strongly recommended.

🎯 This seminar is for you if:

✅ You've already used ChatGPT or Gemini

✅ You want to use AI more deeply and broadly

✅ You're interested in streamlining your work and building systems

✅ You're curious about Claude but haven't tried it yet

👤 Profile

Hajime Takanashi | Representative, Cognify LLC

A hands-on AI implementer who brings "AI that goes beyond the chat window" into real workplaces. From introducing AI agents into small and medium-sized businesses to supporting grant applications and building high-volume content pipelines, he builds systems that actually run, across all industries. A frequent speaker at executive seminars and study sessions.

📌 Register here

https://takumiyamzaki.stores.jp/

And here I am again — writing about AI hacking issues and no-pressure sales methods on the blog.

This casual, just-throw-it-out-there quality was always one of the best things about blogging.

But once I start trying to put things together properly on note, I'm a little worried I'll find it too much effort and just stop writing altogether.

This blog was always just a place to pour out whatever I was thinking, whatever I'd learned — and looking back, that was actually really good for getting my own head straight too.

That's what I realized.

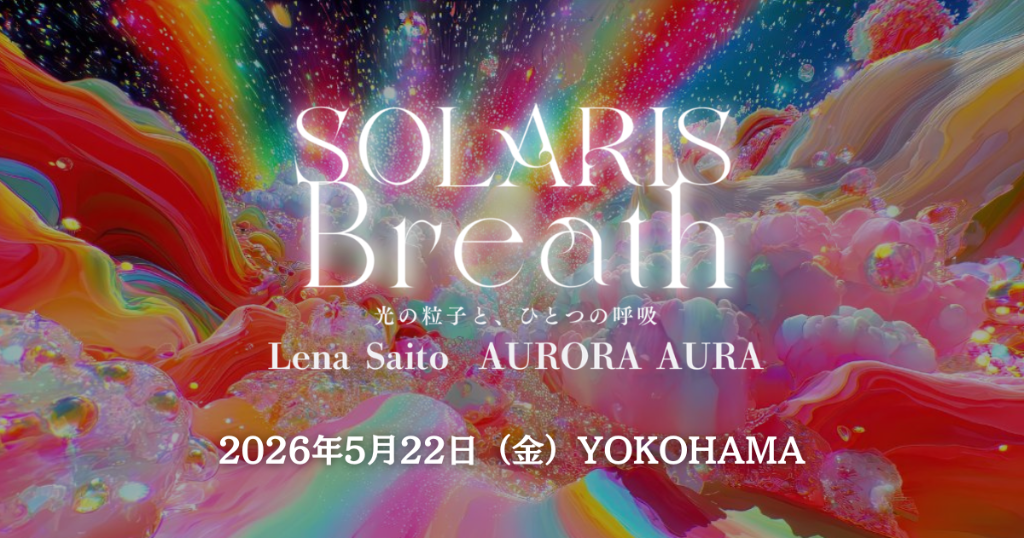

A friend is putting on a wonderful event in Yokohama!

↓↓↓

On May 22nd, at the Konica Minolta Planetarium in Yokohama — an immersive meditation experience, taking in art and sound beneath the stars.

↓↓↓

Lena Saito | Musician / Frequency Researcher

A musician and frequency researcher specializing in "expanding consciousness through sound." She explores the relationship between sound and the body through collaborative research with neuroscientists, investigating how the rich overtones produced by rare vintage analog synthesizers act upon the brain and pineal gland.

I tried kickboxing for the first time.

Gathering!

Thank you, everyone in Takamatsu!

I had an adjustment via the lyre.

A vibrational tuning instrument conceived by Steiner.

Thank you for preparing this in my room!

It's all flour-based, after all〜

Thank you for your time!

Thank you!

Maybe this is just the kind of thing to post on note too!

Link to Takumi Yamazaki’s

ENGLISH Book “SHIFT”